Category: AI / Machine Learning

KTH 2018 PhD Supervisory Panel

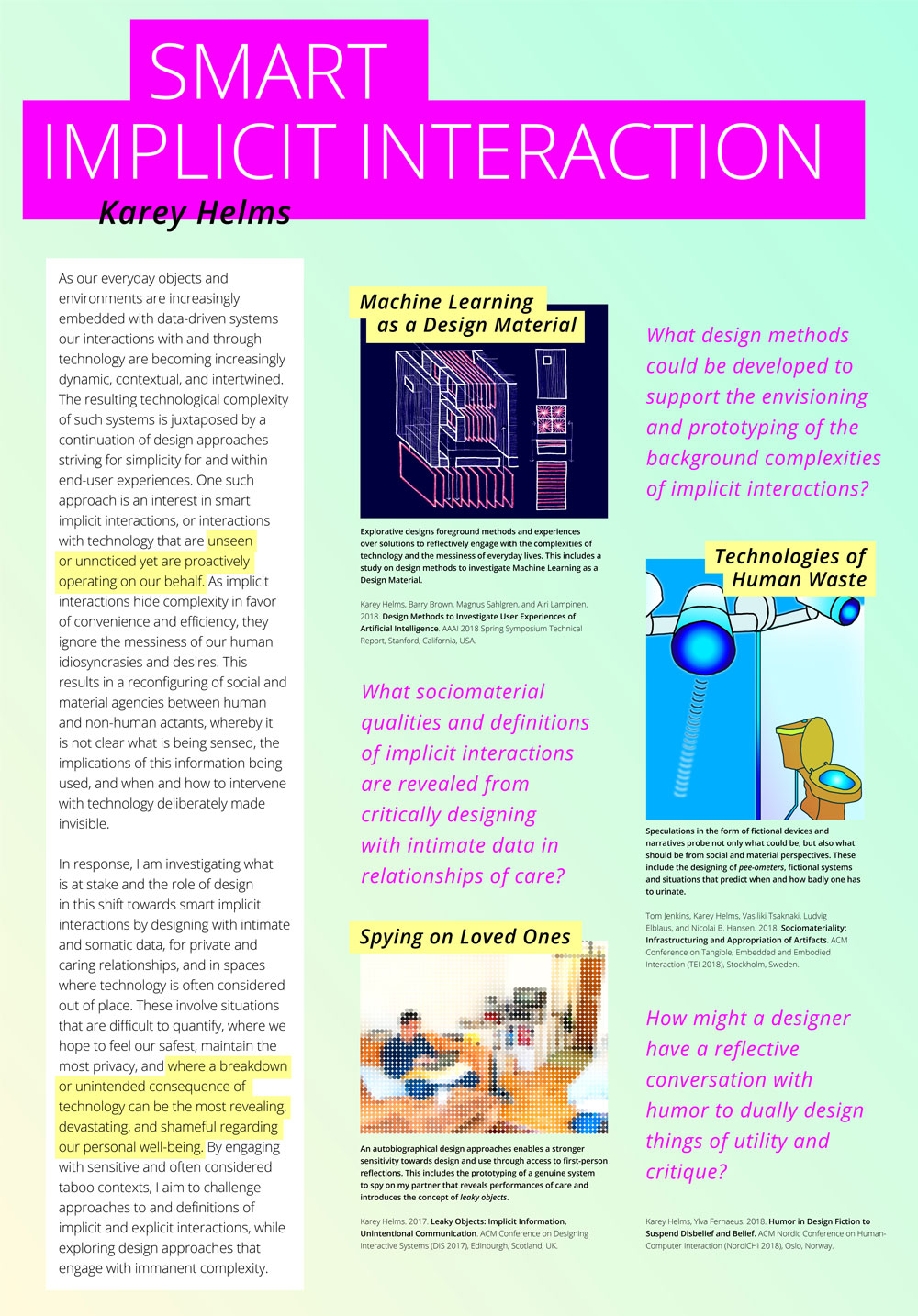

Today was our department’s annual PhD Supervisory Panel at KTH, during which PhD students are given the opportunity to get feedback from senior researchers who act as “guest supervisors.” To prepare for my meeting with two Associate Professors, I reworked my research abstract and research questions following my 30% seminar in October, during which Johan Redström from Umeå Institute of Design acted as my discussant. My goals in today’s supervisory panel were to get feedback on the new scope of my abstract and research questions given that I am only about 40% through my PhD (and I’m sure they will continue to evolve), and to identify important areas that I need to articulate better to more firmly position my research in the context of how I am conceptually “furnishing” my design space. Considering I was presenting to senior researchers from different academic backgrounds than my own and each other’s, it was especially helpful to see in which aspects I felt misunderstood, i.e. where I need to sharpen my arguments. Below is my poster summarizing my research thus far, followed by a few notes/reflections based on feedback received today, and a textual version of my abstract and research questions.

UID Wednesday Lecture 2018 – Crafting Humorous Fictions & Taboo Frictions

Yesterday I had the pleasure of spending the day at Umeå Institute of Design (where I did my MFA in Interaction Design). In the morning I spent a couple of hours with upcoming IxD master thesis students to discuss my experience, and in the afternoon gave a talk titled “Crafting Humorous Fictions & Taboo Frictions” as part of the design school’s Wednesday lecture series. Was great to be back, even if only for a day! Below is the abstract of my talk.

Talk abstract

Karey Helms is a PhD Student at KTH Royal Institute of Technology researching smart implicit interactions, those that are unseen or unnoticed yet proactively operate on our behalf. Her research through design approach includes speculative and autobiographical methods in which she designs humorous fictions and taboo frictions with intimate and somatic data to surface the social and societal implications of data-driven systems. These include the designing of fictional devices that predict when and how badly one has to urinate, and the prototyping of a genuine system to spy on her partner.

In this talk, she traces back her playful approach to fiction and friction to her master’s thesis in Interaction Design at UID and how she employed this approach while working in industry delivering actualized services within enterprise IoT prior to beginning her PhD. The aim of this talk is to advocate for humor in design and to craft experiences that disrupt and disturb to not only provoke others to think, but also yourself as a designer.

AAAI 2018 – Presentation & Slides

Last week I presented my paper Design Methods to Investigate User Experiences of Artificial Intelligence at the 2018 AAAI Spring Symposium on the User Experiences of Artificial Intelligence. The picture above was taken by a Google Clips camera, which was also one of the presented papers. Below are my slides, which contain supplementary images to my paper on the three design methods I have engaged with relative to the UX of AI. Another blog post will soon follow with reflections on other papers presented.

AAAI 2018 – Accepted Spring Symposia Papers

Two papers were accepted to the AAAI 2018 Spring Symposia: Design Methods to Investigate User Experiences of Artificial Intelligence for The UX of AI symposium and The Smart Data Layer for Artificial Intelligence for the Internet of Everything symposium. I’ll be presenting the former at Stanford at the end of March; below is the abstract.

Design Methods to Investigate User Experiences of Artificial Intelligence

This paper engages with the challenges of designing ‘implicit interaction’, systems (or system features) in which actions are not actively guided or chosen by users but instead come from inference driven system activity. We discuss the difficulty of designing for such systems and outline three Research through Design approaches we have engaged with – first, creating a design workbook for implicit interaction, second, a workshop on designing with data that subverted the usual relationship with data, and lastly, an exploration of how a computer science notion, ‘leaky abstraction’, could be in turn misinterpreted to imagine new system uses and activities. Together these design activities outline some inventive new ways of designing User Experiences of Artificial Intelligence.

PhD’ing – Writers’ Retreat, Project Offsite, Outdoors Research, and Making Preciousness

Today I saw a meme on Instagram which said, “We are now entering the third month of January.” I couldn’t relate more! And looking back over the past few weeks, cannot believe all that has already happened in 2018.

Writers’ Retreat

Following a paper deadline in early January, my department at KTH (Media Technology and Interaction Design) kicked off 2018 with a writers’ retreat. What happens at a writers’ retreat? We book a venue in the Stockholm archipelago for three days and two nights, and write. And sauna and winter swim, but mainly write. The primary purpose of the retreat is to provide time and space away from everyday academic duties, from teaching to admin responsibilities, in order to focus on increasing the quality and quantity of our writing output. During the three days, we follow an agile framework in which junior/senior pairs write in ~45 minute sprints and then provide ~15 minutes of feedback. In addition to intense writing blocks, lunches, dinners, and evening activities provide ample opportunities to better know our colleagues professionally and personally. Though equally as exhausting as the writing, this social time I find incredibly valuable in creating a continued collaborative culture at work.

During this year’s writing camp I started a paper on a Pee-ometer, a recent project by Master’s students that I proposed and supervised in which they prototyped a wearable device that predicts when a user has to pee to investigate Machine Learning as a design material.

Project Offsite

In mid-January, the Smart Implicit Interaction project had a two-day project offsite. As the project is composed of differing philosophical and methodological backgrounds – i.e. Artificial Intelligence, Social Sciences, and Interaction Design – the first day consisted of a beginner’s overview of reinforcement and representational learning in neural networks to introduce technical terminology and objectives. During the second day, all of the sub-projects presented their current status and goals for the year. I specifically presented two ongoing design projects, data-driven design methods and the Pee-ometer. In the former, I discussed early design activities and resulting concepts from investigating the implications of screenshots as a data source. In the latter, I discussed three high-level interests guiding future project directions, including Machine Learning as a design material, interactional loops, and critique and ethics. Overall, it was inspiring to share and strategize better collaborations while revisiting overarching project objectives.

Outdoors Research

Last week continued January’s streak of out-of-office research activities, this time in the forest. To kick off a new outdoors project, three senior researchers and I went on a mid-week day hike 30 minutes outside of Stockholm. Not only was I surprised at a Professor’s ability to make a fire in the snow, but the excursion was both refreshing and constructive. More in the coming months!

Making Preciousness

And last but definitely not least, friend and fellow PhD student Vasiliki successfully defended her thesis Making Preciousness: Interaction Design Through Studio Crafts. Her opponent Ron Wakkary gave a much deserved brilliant presentation of her work before lengthy discussions with him and the committee. Admittedly, it is selfishly bittersweet to see her finishing as she has been a tremendous support and inspiration during the first year of my own PhD.

PhD’ing – Taboo, Tinkle, Algorithms, and Home

For an autobiographical project building off Leaky Objects, in which I’m allowing myself to undertake a significantly slow design process, this week I read Geography of Home: Writings on Where We Live by Akiko Busch. The book is a collection of beautiful musings organized by room and touches upon notions of comfort, dichotomies between possessions and spaces, clutter, deeply personal rituals, thresholds, processions, and changing functionalities. There is so much I love in this book as I map the geography of our tiny home, which defies all traditional expectations and conditions. Last year before moving from London to Stockholm, we read The Life-Changing Magic of Tidying Up, an amusing read that we nevertheless abided by, asking everything we own, “Do you bring me joy?” Not only did we end up giving away bags of stuff, but it also prompted many meaningful discussions that helped align our views on possessions and a home, in addition to musings such as: if someday everything will be connected, will all our possessions be too? And why do we keep them, and do we deserve them if possession implies care, custody, and guardianship? More and more through many of my ongoing projects and initial concepts, I realize I’m interested in overlaps between privacy and care, and perhaps comfort too.

A project I recently started with MSc students is investigating predicting pee habits, which to many is a taboo topic due to the invasive nature of the proposed interaction and an aversion to recognizing private bodily functions. A subsequent discussion with my supervisor on researching taboo topics led to the reading of the paper Accountabilities of Presence: Reframing Location-Based Systems and the website Between the Bars: Human Stories from Prison. In the paper, the authors research paroled sex offenders who are tracked via GPS to explore the intersection between mobility, presence, and privacy, while the website offers a digital platform to share handwritten content from people in prison who do not have access to the internet. In both cases, what could be considered extreme or fringe users are researched or designed for. Though interesting for different reasons, both serve as case studies that either directly learn from or address the messy realities of society. One of my biggest peeves – designers don’t address the messiness of life nearly enough. So while pee might not be prison, it is definitely an everyday and occasionally very messy, mundane, and universal reality that should not be ignored, as I believe there is much to be gained from deviating from designing only delightful experiences.

On a fun, related note, I recently found out that Engelbart conceived what he called the “tinkle toy”, a small waterwheel in a toilet bowl that would spin when pee was run over it, serving as a potty-training aid for boys as the interaction was designed to be an incentive to pee in the toilet. From Markoff’s What the Dormouse Said: How the Sixties Counterculture Shaped the Personal Computer Industry.

A week or two ago, it was difficult to miss Algorithms as culture: Some tactics for the ethnography of algorithmic systems on Twitter. Found it incredibly informative and a nice complement to Dourish’s The Stuff of Bits: An Essay on the Materialities of Information, which I started last week for implicit interaction book club. The paper also led me to these slides The algorithm multiple, the algorithm material: Reconstructing Creative Practice – in which I was thrilled to see my former UVa architecture professor and brief employer Jason Johnson of Future Cities Lab mentioned.

Lastly, this morning I found this open source software by Rebecca Fiebrink for real-time, interactive Machine Learning that hopefully I can use with Arduino.

EuroIA 2017 – Smart Implicit Interactions

Two weeks ago I had a lovely time speaking about my current research interests and questions surrounding Smart Implicit Interactions with industry practitioners at EuroIA 2017 in Stockholm!

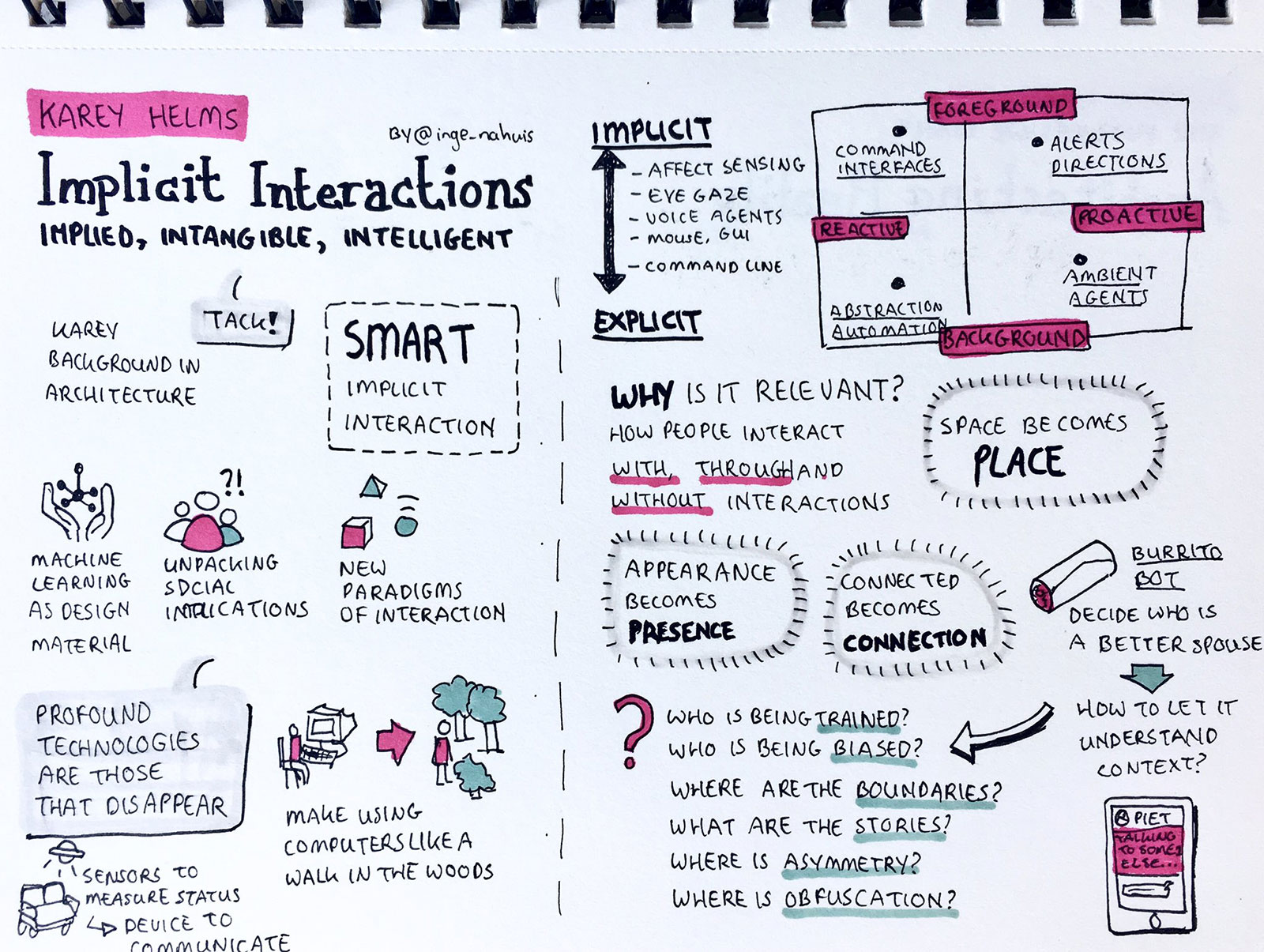

Thanks EuroIA for the INTENSE photo above and Inge Nahuis for the sketchnotes below!

DIS 2017 – Workshop Reflections and Design Fiction

As previously mentioned, I attended, and thoroughly enjoyed, the workshop at DIS17 on People, Personal Data and the Built Environment. Not only was the topic relevant to my project, interests, and background, but I also found the strict structure (and quick introductions) very effective, resulting in meaningful discussions at the end of the day.

The below image is a fraction of a future IoT system followed by a corresponding fictional narrative created with Albrecht Kurze.

Unfinished Business

2022 – five years after 2017 – a public space odyssey

It is the year 2022, five years after the government started to implement the dynamic waiting management system in public buildings. To simultaneously reduce waiting times while keeping visitors preoccupied, the system routes visitors on an adaptive, and often the least efficient, way through the building. As this has unsurprisingly resulted in many lost visitors, a place-based location-aware voice-controlled guiding and help-and-get-helped system was introduced, in which visitors can leave voice messages to aid other lost visitors.

Dave was born in 1950 and retired in 2017, the same year his wife died. Every time he enters public buildings, he is asked to confirm the usage and data processing terms of the building, as smart buildings are being classified as interactive data processing units by the 2019 extended GDPR. These temporary consents are based on minimum viable data and are thus only valid for a single visit as all data collected is automatically deleted or anonymized upon leaving.

Dave pretends to apply for a hunting license but actually just wants to hear his wife’s voice in a message she left in the help-and-get-helped system after the system’s implementation. As the system is gender intelligent, he needs a female to find his wife’s message. Furthermore, the message is not locationally linked nor directly addressable because of dynamic shuffling and the anonymization policy.

Claire was born in 2001 and has been applying for a family planning permit for the past five days. As the system is implicitly regulating family planning, prioritizing lonely widows and widowers, which Claire is not, it sends her on an impossible route. As she is not successful in her quest for a permit by the end of each day, the temporary data consent causes her to restart the whole process the following day, resulting in a never-ending journey.

But this one special day Dave and Claire met, two lost visitors, trapped some way in and by the system. They decided to help each other and resolve their unfinished business.

EuroIA 2017 – Upcoming talk on Implicit Interactions

Very excited to be giving a 20 minute talk on September 30th at EuroIA in Stockholm on Implicit Interactions: Implied, Intangible and Intelligent! Below is my talk abstract.

As our physical and digital environments are ubiquitously embedded with intelligence, our interactions with technology are becoming increasingly dynamic, contextual and intangible. This transformation more importantly signifies a shift from explicit to implicit interactions. Explicit interactions contain information that demands our attention for direct engagement or manipulation. For example, a physical door with a ‘push’ sign clearly describes the required action for a person to enter a space. In contrast, implicit interactions rely on peripheral information to seamlessly behave in the background. For example, a physical door with motion sensors that automatically opens as a person approaches, predicting intent and appropriately responding without explicit contact or communication.

As ambient agents, intelligent assistants and proactive bots drive this shift from explicit to implicit interactions in our information spaces, what are the implications for everyday user experiences? And how do we architect dynamic, personal information in shared, phygital environments?

This talk aims to answer the above questions by first introducing an overview of explicit and implicit interactions in our mundane physical and digital environments. Then we will examine case studies in which unintended and unwanted consequences occur, revealing complex design challenges. Finally, we will conclude with example projects that explore a choreography between explicit and implicit interactions, and the resulting insights into architecting implied, intangible and intelligent information.

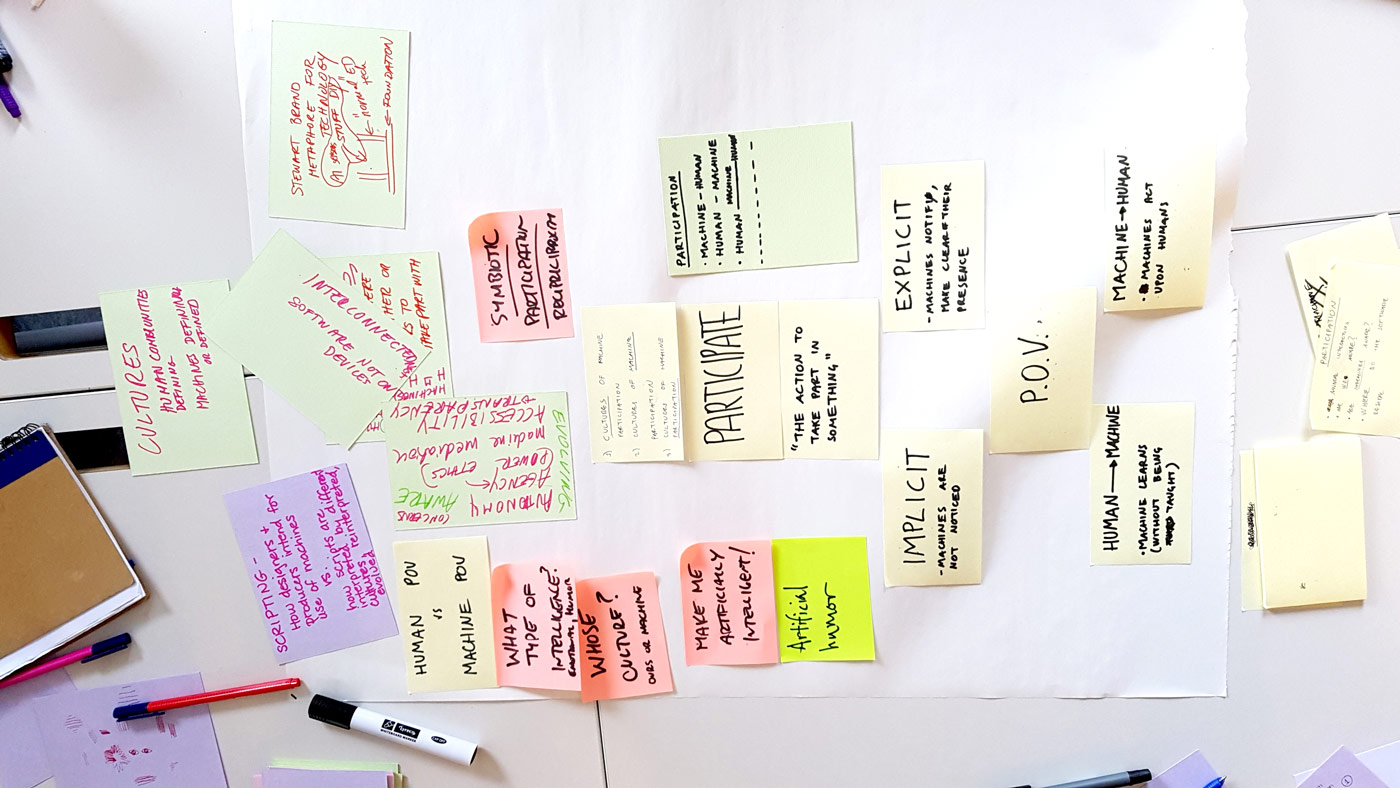

Workshop – The Cultures of Machine Participation

Earlier this week I attended a workshop held by the research group for the design of information systems (DESIGN) at the University of Oslo (UiO) on The Cultures of Machine Participation.

Design Brief – Manuals of Misuse

Below is the design brief that I wrote for the master’s level course in which I am a Teaching Assistant. The course is Interaction Design as a Reflective Practice.

Design Brief

Technological forecasts often predict that someday in the future everything will be connected. What is everything? And why is connected so often synonymous with tangible interactions being transferred to mobile applications or voice-based assistants? Additionally, these solutions primarily focus on efficiency and automation, signaling a shift from engagement to a frictionless relationship with technology. Is this shift necessary, or do our physical things have overlooked abilities, hidden meanings or magical uses?

The project Manuals of Misuse investigates these questions by examining our everyday interactions with faceless objects and reimagining how they might be misused to playfully control or meaningfully communicate with other things, people or places.

You will do this by:

- Reflecting upon the intended interactions of an everyday object of your choice

What is the essential use of the object and the ideal scenarios of interactions?

- Communicating how the object is meant to be used, interacted with and part of an ecosystem

What information is needed to communicate to a novice user, product partner, or manufacturer?

- Investigating how the object is or could be misused – used for purposes not intended by the designer

What are the object’s properties and/or affordances that result in these misuses?

- Generating novel design concepts of playful, meaningful or magical misuse

What modifications, interactions and technologies are required to make this possible?

- Creating a stand-alone, multimedia experience that conveys your reimagined misuse

What is needed to communicate the essence of your concept and prompt further investigation?

Objectives

- Reflection: Upon our mundane, micro interactions with everyday objects. How do we use things and why do we use, or misuse, them? What can we learn from misuse and how does it inform how open or closed we design for appropriation?

- Communication: Of design intent, technical specifications and user experience to diverse audiences through various mediums.

- Playfulness: For engaged action and meaningful interaction.

- Documentation: For reflection and communication.

MeetUp – Artificial Intelligence, Machine Learning & Bots

Back in June I attended Practical Introduction into Artificial Intelligence by ASI Data Science as part of London Technology Week. The event was very well structured, and more importantly, perfectly distilled complex theories and processes into a digestible format for a novice like myself. I left feeling like an expert, and was able to confidently re-articulate the evening to others, which I think is very much a sign of a well run event and of course, great instructors. Moreover, as I’m navigating the world of artificial intelligence and machine learning in relation to my role and interests as an Interaction Designer, I’m being intentionally thoughtful regarding the Pareto Principle – I don’t actually need to be an expert, but do want a solid 20% foundational knowledge base.

Anyhow, the evening began with a history of artificial intelligence and the corresponding theories of influential scientists on the topic before launching into a hands-on session in which participants built their own handwriting recognition engine. Key takeaways included a clearer understanding of the relationship between artificial intelligence and machine learning, a new comprehension of artificial neural networks (see slide below), and insights into what is and is not currently possible relative to real world applications.

Fast forward to this past Tuesday, when I attended a MeetUp by Udacity at Google Campus London on Machine Learning and Bots by Lilian Kasem. Very different content and structure but equally insightful. Lilian’s talk was centered around Bots specifically – from the definition of a bot, to a live coding demo of the creation of a bot in Microsoft Bot Framework, and best bot practices – all while weaving in the integration of machine learning if it adds value (I also appreciate her stress on the if). Her resources in the image below:

In addition to the obvious relevance of these two events to current interaction design trends, they are helping me formulate next steps for two of my current projects, one personal and one professional.

The personal project – Burrito, a marriage bot that analyzes messages between my husband and me to determine who is a better spouse – is currently being refactored into a formal bot for Telegram. Lilian’s talk in particular was very helpful as my project focus has transitioned from theoretical to technical, and I am now seeking to create a higher fidelity product and implement better, if not best, programming practices.

The professional project, for which I will intentionally be quite vague, investigates the impact of implicit data on enterprise organizational and technical systems, and in particular, the transition from frictionless to exception-based workflows. As an Interaction Designer, I firmly believe it is important to not only empathize with humans, but also technology and its corresponding data because the concept of user – who, what, or where – is increasingly being blurred. Therefore, ASI’s introduction into AI was particularly helpful in how I understand, design for, and implement data into my professional practice.

Long story short – two great events! In the coming weeks, I aim to write a followup post regarding my technical developments on Burrito bot, as well as an online course I began this week to formalize my programming skills relative to the Internet of Things.

IxDA London – The Uncanny Valley & Subconscious Biases of Conversational UI

The theme of IxDA London’s June event was Algorithms, Machine Learning, AI and us designers – an evening of great discussions that prompted me to dig up reading material on The Uncanny Valley and Subconscious Biases. Both these topics were strongly present, the former directly and the latter indirectly, in Ed and John’s presentation on designing for IBM Watson. They discussed the ‘Uncanny Valley of Emotion’ as a third line on the curve in addition to ‘still’ and ‘moving’ in the traditional model of the uncanny valley. While I understand their intent in creating a third category – accounting for the invisible systems, agents, and interactions not visible or physically accessible – in retrospect I disagree with the characterization. Emotion, or lack of, can be explicitly betrayed by movement. From my understanding, subtle asynchronous or unnatural movements directly related to emotional responses expected by humans are a key ingredient in the Uncanny Valley. Therefore, I would rename the ‘emotion’ curve suggested by the Watson team to ‘implicit,’ thereby retaining emotion as a criterion for both explicit (still and moving) and implicit interactions.

The second subtopic, subconscious biases, greatly concerns me. A recent article in the New York Times – Artificial Intelligence’s White Guy Problem – sums it up perfectly. As designers, how do we build into our processes accountability for subconscious (and conscious) biases relative to algorithms, machine learning, and conversational interfaces? I don’t have an answer but I would like to find one!

Relevant links and resources:

The Uncanny Valley

Uncanny valley: why we find human-like robots and dolls so creepy

Navigating a social world with robot partners: A quantitative cartography of the Uncanny Valley

The Uncanny Wall

Artificial Intelligence’s White Guy Problem