How can we exhibit emergent musical patterns through simple instrumental interactions?

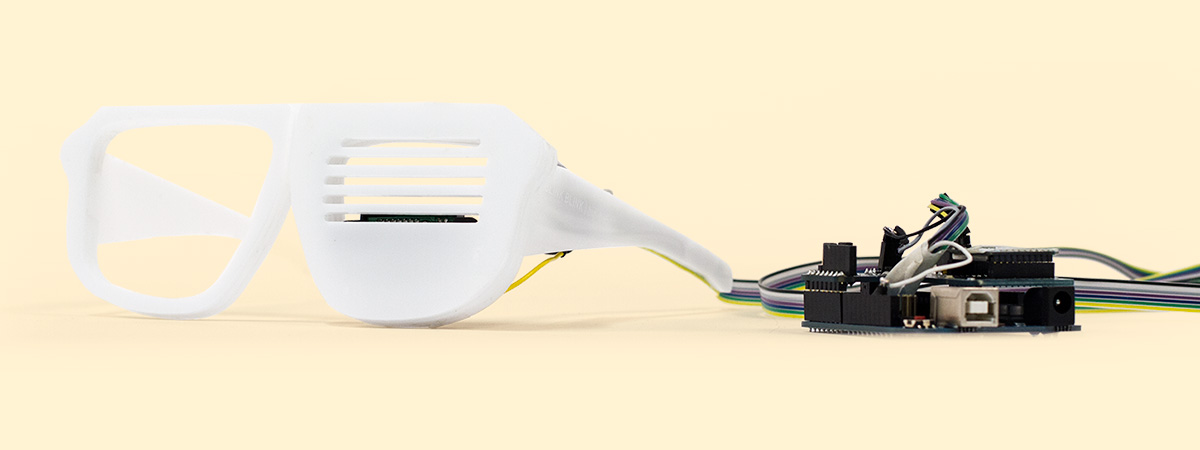

Blink Blink by Bip Bop is an emergent musical instrument rooted in the subtle communication of facial expressions. Whether subconsciously or with full intent, our faces are a powerful tool of communication. A wink, a slight smile, or the lift of an eyebrow can convey many messages, whether in response to others or directed at them. Using a proximity sensor to detect blinks and an accelerometer to read head position, Blink Blink transforms this silent dialogue into an interactive rhythm.

Improvisation Interfaces was a one-week project within the larger Experience Prototyping course. We were asked to design a musical instrument that could be part of a larger musical system, one in which each unique instrument can both inform and be informed by the others, thereby exhibiting emergent properties through the complex musical patterns created by simple instrumental interactions.

Blink Blink by Bip Bop uses a proximity sensor, pre-calibrated for each user, to detect eye blinks, and a 3-axis accelerometer to detect head movements. The sensor values are first collected on an Arduino and then sent to Processing via serial communication. The translation from sensor values to sound was done with Minim, a free audio library for Processing.
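The pipeline described above (per-user calibration, serial transfer, sensor-to-sound mapping) can be illustrated with a minimal sketch. It is written in Python purely for readability and is not the project's Arduino or Processing code. Everything specific in it is an assumption made for illustration: the `proximity,ax,ay,az` line format, the rule that the proximity reading rises when the eyelid closes, the "mean plus three standard deviations" threshold, and the 220 to 880 Hz pitch range.

```python
import math
from statistics import mean, stdev


def calibrate_threshold(open_eye_samples, k=3.0):
    """Per-user blink threshold: baseline mean + k standard deviations (assumed rule)."""
    return mean(open_eye_samples) + k * stdev(open_eye_samples)


def parse_line(line):
    """Parse one hypothetical serial line 'proximity,ax,ay,az' into numbers."""
    prox, ax, ay, az = (float(v) for v in line.strip().split(","))
    return prox, (ax, ay, az)


class BlinkDetector:
    """Reports a blink once, on the reading where the eye goes from open to closed."""

    def __init__(self, threshold):
        self.threshold = threshold
        self.closed = False

    def update(self, proximity):
        # Assumption: a closed eyelid gives a HIGHER proximity reading.
        is_closed = proximity > self.threshold
        blink = is_closed and not self.closed
        self.closed = is_closed
        return blink


def tilt_to_frequency(ax, az, low_hz=220.0, high_hz=880.0):
    """Map left/right head tilt to a pitch on an exponential (musical) scale."""
    angle = math.atan2(ax, az)                            # 0 when the head is level
    angle = max(-math.pi / 2, min(math.pi / 2, angle))    # clamp to +/- 90 degrees
    t = (angle + math.pi / 2) / math.pi                   # normalise to 0..1
    return low_hz * (high_hz / low_hz) ** t


# Example: calibrate on a few open-eye readings, then process incoming lines.
detector = BlinkDetector(calibrate_threshold([100, 102, 98, 101, 99]))
for raw in ["100,0.0,0.0,1.0", "110,0.0,0.0,1.0", "111,1.0,0.0,0.0"]:
    prox, (ax, ay, az) = parse_line(raw)
    if detector.update(prox):
        print("blink: trigger a note at", round(tilt_to_frequency(ax, az)), "Hz")
```

Triggering only on the open-to-closed transition keeps a single long blink from retriggering the sound on every reading.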

During our process, we experimented with a variety of sensors and technologies for blink detection, including IR diodes, a NeuroSky MindWave headset, and camera color detection. Both the MindWave and camera solutions worked, but we decided against them: the MindWave, as an already refined product, limited our physical prototyping, and camera color detection required more calibration for each individual use than the proximity sensor.

There are many ways in which Blink Blink by Bip Bop could be influenced by other instruments in the class, for example lights that cause the wearer to blink or actions that cause the wearer to move or tilt their head. Later in the week we explored adding visual output alongside the music. We attached an RGB NeoPixel Ring to the laser-cut frames and explored color manipulation based on the sensor values. Light and color were chosen as outputs because other instruments were exploring reactions to external light and/or color information.
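As a rough illustration of color manipulation driven by sensor values, the sketch below maps a normalised head tilt to a hue and uses a blink to flash the ring at full brightness. It is Python written for readability, not the code that ran on the ring. The mapping itself, the 16-pixel ring size, and the function names are assumptions; the write-up does not state which mapping was used.

```python
import colorsys


def sensor_to_rgb(tilt, blink, idle_brightness=0.2):
    """Turn a normalised tilt (0..1) and a blink flag into an 8-bit RGB tuple.

    Assumed mapping: tilt sweeps the hue from red (0.0) to blue (1.0),
    and a blink raises the brightness from idle to full.
    """
    tilt = max(0.0, min(1.0, tilt))          # guard against out-of-range input
    hue = tilt * 2.0 / 3.0                   # 0 = red, 1/3 = green, 2/3 = blue
    value = 1.0 if blink else idle_brightness
    r, g, b = colorsys.hsv_to_rgb(hue, 1.0, value)
    return tuple(int(round(c * 255)) for c in (r, g, b))


def ring_frame(tilt, blink, pixel_count=16):
    """One frame for a ring of `pixel_count` pixels (16 is a hypothetical size)."""
    return [sensor_to_rgb(tilt, blink)] * pixel_count


# Example: head level (tilt 0.5) during a blink gives a bright green ring.
print(ring_frame(0.5, True)[0])
```

Working in HSV keeps hue and brightness independent, so tilt and blink can each control one visual property without interfering with the other.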

Umeå Institute of Design (Experience Prototyping – Improvisation Interfaces)

Bart Hengeveld & Mathias Funk (tutors)

Team

Jessica Williams & Jacob Cyriac

Roles

Concept, prototyping, and final video were a full-team collaboration

Skills

Processing, Arduino, proximity sensor, accelerometer, NeoPixel Ring, After Effects, Premiere